In the intricate world of audiovisual (AV) music licensing and royalties, cue sheets are the backbone, connecting music creators to their rightful income. Think of them as the detailed logs of every music usage in films, TV shows, and even online videos. Cue sheets are the vital documents that ensure composers, artists, and musicians receive the royalties they deserve, based on how and where their music is played.

In the words of Cathy Merenda of Sony Music Publishing: no cue sheet = no money.

💡 What is a cue sheet?

A cue sheet is a document that lists all the music used in a film, television program, or other audiovisual production.

Performing Rights Organizations (PROs) use them to track the use of music in films and TV. Without cue sheets, it is nearly impossible for composers and publishers to be paid for their work.

Historically, it was a sheet of paper, a ledger of sorts. Today, it is usually a spreadsheet, and newer cue sheets are hosted in the cloud.

Their vital nature means that cue sheets shouldn’t be viewed as just paperwork – they’re directly tied to revenue. Inaccurate or missing cue sheets translate to lost income, legal headaches, and damaged relationships with composers and rights organizations.

Cue sheets are a composer’s lifeline to getting paid. Just ask Ginia Eady-Marshall at Disney. She ran the numbers: by ensuring Disney’s cue sheets were accurately processed, she boosted music royalty income by 12% in 2018. That’s a significant pay raise for triple-checking every field of each cue sheet for minor inconsistencies or gaps in information.

As music usage explodes and diversifies across platforms, cue sheets become essential for tracking its increasingly complex journey. This blog post draws on the expertise of Orfium’s panel at the 2024 Production Music Conference, “Your Music, Your Money: Cue Sheet 101 to Maximize Music Royalties”, with insights, advice and knowledge from:

- Ginia Eady-Marshall, The Walt Disney Company

- Jacob Yoffee, Composer

- Cathy Merenda, Sony Music Publishing

- Mark Vermaat, Orfium

Top 10 Cue Sheet Tips and Best Practices

1. Prioritize Cue Sheet Precision: Accuracy is King

Inaccurate cue sheets directly result in lost revenue for everyone involved. Think of it as money left on the table, plus the potential for legal issues and strained relationships.

“Cue sheets are a legal document in the music ecosystem, so it’s vital that they’re right the first time round.” – Cathy Merenda, Sony Music Publishing

Top Tip for Production Music Companies

Make quality control a religion. Double-check every cue sheet before it’s submitted, focusing on timings, usage descriptions, and composer/publisher details (including minute details such as spelling and capitalization). At a bare minimum, double and triple check:

- Entitled/Interested Parties

- Composers

- Publishers

- Song Writers

- Cue name

- How the music was used (Is it a visual, vocal, main title, background instrumental etc.)

With so many fields and such a high level of detail required, you may want to consider software tools to automate parts of this process and reduce human error.
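As a rough idea of what an automated pre-submission check could look like, here is a minimal TypeScript sketch. The `CueEntry` shape and its field names are hypothetical, loosely modeled on the checklist above; they are not taken from any PRO template or from Soundmouse. It also assumes every party list is required, which may not hold for every production.

```typescript
// Hypothetical shape of one cue sheet row; field names are illustrative only.
interface CueEntry {
  cueName: string;
  usage: string; // e.g. "main title" or "background instrumental"
  composers: string[];
  publishers: string[];
  songWriters: string[];
}

// Returns a list of human-readable problems; an empty list means the entry passed.
function checkCueEntry(entry: CueEntry): string[] {
  const problems: string[] = [];
  if (entry.cueName.trim() === "") problems.push("cue name is empty");
  if (entry.usage.trim() === "") problems.push("usage is empty");

  const partyLists: Array<[string, string[]]> = [
    ["composers", entry.composers],
    ["publishers", entry.publishers],
    ["songWriters", entry.songWriters],
  ];
  for (const [field, names] of partyLists) {
    // Assumption: every party list must contain at least one name.
    if (names.length === 0) problems.push(`${field} is empty`);
    for (const name of names) {
      // Stray whitespace is a common source of mismatched names.
      if (name !== name.trim()) problems.push(`${field}: "${name}" has stray whitespace`);
    }
  }
  return problems;
}
```

A check like this will not catch a misspelled name, but it can stop obviously incomplete rows before a human reviewer spends time on them.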

Top Tip for Composers

Ditch the poetic track titles when it comes to cue sheets. Instead, opt for clear, descriptive titles that include the production name and episode or scene information.

Why? It makes it easier for everyone involved to identify your music and ensure you get paid accurately, especially when working internationally or with production companies dealing with high volumes of creations and music.

Save the creativity for your music, not your cue sheet titles!

“Avoid overly poetic titles on your cue sheets. Production music libraries handle tons of tracks, so make it easy for them to identify yours with clear, concise titles. Think ‘Main Title – Episode 3: The Heist’ instead of ‘Cosmic Dance.’ It helps them when they’re cross-checking the finer details.” – Jacob Yoffee, Composer

2. Use new technology to make cue sheet creation and management easy and accurate for yourself

Staying current with technology and training your team is key to efficient and accurate cue sheet creation.

Top Tip

Explore cue sheet management platforms like Soundmouse by Orfium. Offer regular training for your staff on best practices, metadata handling, and any new tech developments.

3. Foster Open Dialogue Internally and with Third Parties: Communication is Key

Clear communication between all relevant parties, from Production Music Company composers to editors and production teams, ensures everyone’s on the same page about music usage.

Top Tip for Production Music Companies

Establish easy communication channels for feedback throughout production. Encourage composers to be proactive about their music’s usage tracking by highlighting the importance of cue sheets. Provide user-friendly templates for cue sheet submission, tailored to the specific PRO they need to be delivered to.

Top Tip for Composers

Don’t be afraid to get in contact and chase down your cue sheets, as well as all your other paperwork, even if your music is under a blanket license through a Production Music Company.

“If you license your music into a production, you should receive a full copy of the license, your payment and your cue sheet. If you don’t get all three, you need to chase.” – Ginia Eady-Marshall, The Walt Disney Company

4. Triple Check Identifiers: The Music Industry’s Social Security Numbers

Correct identifiers (IPI/ISWC) ensure the right people get paid, especially internationally where names can get lost in translation.

💡 What is an ISWC?

An ISWC (International Standard Musical Work Code) is an internationally recognised reference number for the identification of musical works.

“Every country has different requirements for cue sheets… You will however have to translate it between different countries, which may or may not have the same requirements on the cue sheet” – Mark Vermaat, Orfium

Without a clear identifier like the ISWC, your royalties could easily get lost in transit.

Top Tip for Production Music Companies

Make IPI/ISWC fields mandatory in your templates. Educate composers and clients on their importance. Consider platforms with automated identifier checks.
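To give a flavor of what an automated identifier check might involve, here is a small TypeScript sketch that validates the format and check digit of an ISWC. The function name `isValidIswc` is made up for this example, and the check-digit rule is the commonly documented one (weights 1 to 9 on the nine digits, plus 1, modulo 10). Treat it as an illustration rather than a replacement for your collecting society’s own validation.

```typescript
// Validates an ISWC written like "T-034.524.680-1" (separators are optional).
// Rule assumed here: 1 + sum(position * digit) + checkDigit must be divisible by 10.
function isValidIswc(raw: string): boolean {
  // Strip spaces, dots and hyphens so "T0345246801" and "T-034.524.680-1" are treated alike.
  const compact = raw.toUpperCase().replace(/[\s.\-]/g, "");
  const match = /^T(\d{9})(\d)$/.exec(compact);
  if (match === null) return false;

  const digits = match[1];
  let sum = 1;
  for (let i = 0; i < digits.length; i++) {
    sum += (i + 1) * Number(digits.charAt(i));
  }
  const expectedCheckDigit = (10 - (sum % 10)) % 10;
  return expectedCheckDigit === Number(match[2]);
}
```

A format check like this only tells you the code is well formed. It cannot confirm that the ISWC belongs to the work named on the cue sheet, so it complements a database lookup rather than replacing it.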

Top Tip for Composers

“If you work with an independent or smaller production company… I would recommend you fill out the cue sheet yourself.” – Jacob Yoffee, Composer

When Composers take ownership of their cue sheets, not only can they ensure accuracy but also strengthen their relationships with production companies. It shows proactivity and attention to detail, which can lead to more opportunities down the line.

5. Automate Cue Sheet Creation With Audio Recognition: Your New Best Friend

For production music libraries, trailers, etc., audio recognition can drastically speed up and improve cue sheet accuracy.

💡What is Audio Recognition for Cue Sheets?

Audio recognition is technology that automatically identifies and lists the music used in videos (TV, Ads, Films, Online etc), making it easier to create accurate records of the songs and their details.

“Your music being used in a YouTube video or an ad halfway around the world is easy to miss. By registering your compositions in a database with audio recognition, you automatically get credited on cue sheets and receive royalties for uses you might never know about. It’s effortless income for the work you’ve already done.” – Mark Vermaat, Orfium

Top Tip for Production Music Companies

Partner with a company offering this service. Make sure your music library is in their recognition database. Consider a hybrid approach, combining manual and automated cue sheet creation. If you’d like to learn more about how Soundmouse by Orfium can help you manage your cue sheet processes, and create cue sheets with Audio Recognition Technology, reach out to our team here.

Top Tip for Composers

Uploading your compositions and tracks to a service that offers audio recognition can save you the time and mental load of constantly scanning the internet for new usages of your works. If you’d like to upload your music to Soundmouse by Orfium, which can fully automate that for you, get in touch with our team here.

6. Metadata: The Backbone of Royalties

Accurate and complete metadata is crucial. Inaccurate info can lead to “unclaimed” royalties, meaning money that’s rightfully owed is floating in limbo.

💡What is Music Metadata?

Music metadata is like a digital label on a song, containing details like title, artist, and genre. It helps organize music, and makes sure everyone involved gets the right credit.

“Metadata might seem boring, but it can unlock hidden revenue you never expected to get. My music for the Jungle Book trailer unexpectedly took off in Brazil, and I’m still receiving royalties thanks to accurate metadata. Never underestimate its power.” – Jacob Yoffee, Composer

Top Tip for Production Music Companies

Set up a system to collect and verify metadata for every track. Consider AI tools to help with matching and improving metadata quality. Regularly update your metadata to reflect any ownership changes.

7. Stay Sharp: Keep Up with Industry Changes

The AV music industry is constantly evolving. Staying informed helps you avoid legal pitfalls and ensures you’re using best practices. Regulations sometimes change, so cue sheet requirements may as well.

Top Tip for Production Music Companies

Attend industry events, join relevant organizations such as the PMA, sign up to your Collecting Society’s email newsletter, and don’t hesitate to consult legal experts when needed.

8. Empower Your Clients: Rights Holder and Composer Education is Key

If you’re a Production Music Company, making sure your composers are educated on the value and impact of cue sheets on their royalties will encourage them to submit accurate and timely information for their cue sheets, making your life easier.

Top Tip for Production Music Companies

Provide crystal-clear guidelines on cue sheets and metadata. Highlight the importance and impact of accurate and timely cue sheets on their revenues. Consider sharing this article with your rights holders and composers. Be available to answer their questions. If there are any you struggle with, we’re here to help! Reach out to us at hello@orfium.com.

9. Don’t Neglect Online Short-Form

While big productions are important, the countless trailers, promos, and shorter online videos and snippets also generate revenue that shouldn’t be ignored.

Top Tip for Production Music Companies

Explore automation (like audio recognition) to handle cue sheets for this content. Work with clients to set clear rules for music use and reporting in short-form projects.

10. Be An Advocate: Fight For Fair Play

Production music companies have a responsibility to ensure their composers get paid fairly. By advocating for transparency and best practices, you help the whole industry thrive.

Top Tip

Get involved in industry discussions on improving cue sheets and royalty distribution. Support organizations protecting music creators’ rights. Encourage open communication between everyone involved in music licensing.

Remember, cue sheets are the lifeblood of the music industry when it comes to all audio-visual content. By taking these steps, you not only protect your revenue but also contribute to a healthier and fairer music ecosystem. If you’re ready to improve your cue sheet management and learn more about the technology mentioned in this article and how it could help your cue sheet processes, contact Orfium today.

Orfium signs global UGC deal with Japanese label Pony Canyon

Pony Canyon Inc., a Japanese entertainment company and leader in the music industry in Japan, has chosen Orfium to help manage and monetize its vast music catalog on YouTube. Pony Canyon works with some of the biggest artists in Japan, including Official Hige Dandism whose song ‘Subtitle’ was the most popular Japanese song on Apple Music and the third most streamed Japanese song on Spotify in 2023. This marks Orfium’s fourth deal in Asia in the past six months.

Pony Canyon Chooses Orfium for Music Catalog Management

In recent years, Pony Canyon has focused on expanding its operations outside of Japan, establishing Pony Canyon USA in 2015. As its global promotional efforts have continued to resonate and feed their success, the audience for Pony Canyon’s music has grown, increasing the need for an effective international UGC monetization partner. Pony Canyon has chosen Orfium as that partner for music catalog management on YouTube, bringing in Orfium’s AI-based matching technology to help track the use of Pony Canyon’s music on user-generated content platforms, ensuring their artists receive the revenue they deserve.

The partnership is key for Pony Canyon as they continue gaining popularity internationally. For Orfium, the deal with Pony Canyon expands its presence in Japan following recent partnerships with Avex, Bandai Namco, Warner Music Japan and JASRAC. In March, Orfium announced that it had also been selected as the music reporting partner of choice for BROMIS, a consortium of major broadcasters and music rights holders in South Korea.

Shinichi Ishii, General Manager of Music Marketing at Pony Canyon, emphasized the importance of this partnership, stating: “With Orfium’s international reach, cutting-edge technology, and proven track record, they are the ideal partner to support our mission of ensuring Japanese music is celebrated and appreciated worldwide.”

Be a part of the journey with Orfium Japan

If you’re interested in our journey and want to explore opportunities with Orfium, get in touch here.

Soundmouse by Orfium has been chosen by the South Korean Broadcast Music Identifying System (BROMIS) as the official music reporting partner. This consortium, led by major broadcasters like KBS, MBC, and SBS and four collecting societies (KOMCA, KOSCAP, FKMP, and KEPA), represents a significant stride forward in transparency and accuracy within South Korea’s music market. As part of the deal, Soundmouse’s industry-leading music cue sheet reporting and audio recognition fingerprinting technology will now be utilized by 36 broadcasters across 175 TV channels and radio stations in South Korea.

Supported by the South Korean Ministry of Culture, Sports and Tourism and the Korean Copyright Commission, the three-year agreement promises to enhance transparency in music reporting processes and ensure fairer royalty payments to creators and rights holders.

This partnership marks a significant milestone for us at Orfium, as Soundmouse by Orfium is one of the first third-party companies granted access to the Korean Music Database, allowing us to deliver seamless matching of reported music against their vast repository of 17.3 million Korean music tracks.

Steve Choi, Secretary General of BROMIS, said the partnership is a game-changer for the music industry in South Korea, stating that “The sharing of clear, transparent, and granular data from a neutral source represents a significant step towards making the music industry a more equitable environment. With the consistent accuracy and reliability of their reporting, Soundmouse by Orfium stood out as the best partner for the project. The quality and accuracy of their reporting processes will be game changing for our industry and will have a positive impact on the remuneration of creators and rights holders as well as the development of our wider industry ecosystem.”

The impact of this collaboration extends beyond mere technological advancements though. South Korean collecting societies will now be able to leverage Soundmouse by Orfium’s reports to inform royalty distributions to their members, including songwriters, recording artists, phonogram producers, and rights holders. By seamlessly meeting cue sheet requirements and streamlining reporting processes, broadcasters will be able to engage in more efficient negotiations with collecting societies, fostering a healthier music ecosystem.

Rob Wells, CEO of Orfium, said, “To be selected for such a significant project underscores the quality of our technology, team, and our commitment to the music industry. We are thrilled to expand our presence in the Asian market, supporting local creators and rights holders while enhancing the transparency and accuracy of music reporting processes.”

Bonna Choi, Head of Soundmouse by Orfium Korea, mirrored this sentiment: “After such a rigorous consultation and trial process, we are excited to have been selected and accredited by BROMIS to work on behalf of creators, rights holders, broadcasters and collecting societies. We will bring extensive industry experience from an expert team, the highest standards in cue sheet reporting, and the most advanced technology in audio recognition fingerprinting to strengthen the process of music reporting in such an important market.”

This exciting new deal, officially approved in January 2024, marks another significant step in Orfium’s expansion in Asia. Following successful partnerships with entities like Avex, Bandai Namco, and the Japanese Society for Rights of Authors, Composers and Publishers (JASRAC), Orfium continues to lead the charge in revolutionizing music reporting and rights management across the continent.

Be a part of the journey with Orfium in Asia

If you’re interested in our journey and want to explore opportunities with Orfium, get in touch here.

Orfium has received confirmation that all of our global operating entities have achieved ISO 27001:2022 standard certification for Information Security Management Systems (ISMS).

The accreditation is an important marker for us at Orfium and our client base, providing important assurance on the robustness of Orfium’s information security policies.

Introduced in 2005 by the International Organization for Standardization and the International Electrotechnical Commission, ISO/IEC 27001 stands as an international benchmark for effective information security management.

This standard offers comprehensive guidance for the establishment, implementation, maintenance and continual improvement of an Information Security Management System (ISMS), outlining the essential requirements that an ISMS must meet.

The adoption of the ISO/IEC 27001 standard certification brings Orfium several benefits, including:

- Risk Management: Identification of information security risks to mitigate vulnerability to cyber-attacks. Preparation of people, processes, and technology across the organization to address potential risks.

- Enhanced Security Measures: Promotion of robust security controls and measures.

- Compliance and Legal Alignment: Support in meeting regulatory requirements related to information security, critical for sensitive data such as financial statements, intellectual property information, or employee data.

- Business Continuity Enhancement: Establishment of protocols for incident management.

- Continual Improvement: Regular reviews and enhancements to the ISMS, fostering a culture of ongoing improvement in security practices.

Having ISO 27001 compliance is an important milestone for Orfium. It assures all our clients that we have robust information security policies in place for all of Orfium’s global operating companies.

Our commitment to customers worldwide is to guarantee that the data they share with us is safe and that we will continually evolve our processes to ensure ongoing compliance with international security practices.

Michael Petychakis, CTO at Orfium

About the ISO/IEC 27001 standard

ISO/IEC 27001 is the world’s best-known standard for information security management systems (ISMS).

The ISO/IEC 27001 standard provides companies of any size and from all sectors of activity with guidance for establishing, implementing, maintaining and continually improving an information security management system.

Conformity with ISO/IEC 27001 means that an organization or business has put in place a system to manage risks related to the security of data owned or handled by the company, and that this system respects all the best practices and principles enshrined in this International Standard.

For more information visit: https://www.iso.org/standard/27001

Building upon Orfium’s recent partnerships with Japanese entertainment giants Avex and Bandai Namco, we are thrilled to announce our latest collaboration with JASRAC, Japan’s largest collective management organization (CMO). Through this exciting venture, our AI-powered technology services will enhance YouTube revenues for JASRAC’s extensive network of over 20,000 members, including talented songwriters, composers, and publishers.

Japanese music is enjoying a global surge in popularity, thanks in part to its remarkable growth on streaming and user-generated content (UGC) platforms. As a result, JASRAC’s members’ catalogs are gaining unprecedented exposure and appreciation worldwide. In recent years, the Japanese Performing Rights Organization (PRO) has been actively exploring avenues to improve remuneration for its valuable community.

JASRAC’s collaborative efforts with the Music Publishers Association of Japan

In recent years, JASRAC has been actively seeking ways to improve financial returns for its community amidst the growth of Japanese music. In collaboration with the Music Publishers Association of Japan, it has been involved in investigating fingerprint technology since 2017. This endeavor led to the implementation of music recognition devices in retail outlets in 2021.

Orfium’s support for UGC revenue streams

Global recognition of JASRAC’s catalog, combined with Orfium’s expertise, has the potential to significantly enhance revenue for JASRAC’s members.

By enabling revenue claiming across UGC platforms, we strive to ensure that Japanese creators and rights holders can fully capitalize on this new revenue stream and maximize the success of their music on a global scale.


Just weeks after our exciting deal with the Japanese entertainment conglomerate Avex, we are thrilled to announce another significant partnership, this time with Bandai Namco Music Live, a key player in Japanese Anime music. This marks a major milestone in our expansion into Japan and entry into the thriving anime music industry.

As Bandai Namco Music Live’s rights management partner on YouTube, we will leverage our AI technology to oversee, optimize, and monetize their extensive catalog on the platform.

Introducing Bandai Namco Music Live

Bandai Namco Music Live, a leading player in the Japanese anime music industry, has an extensive collection of songs and represents over 3,000 talented creators. They have contributed to numerous beloved entertainment moments, from the popular Love Live! series to the action-packed One-Punch Man.

As the popularity of digital platforms such as YouTube continues to skyrocket, the global reach of anime is also increasing. Recognizing this opportunity, Bandai Namco Music Live chose to partner with Orfium for its superior technology, which optimizes the management and monetization of music used in UGC. This will enable them to continually invest in new productions and reach more fans.

As Akiho Matsuo, Rights Department General Manager at Bandai Namco Music Live, put it, “By partnering with Orfium, whose superior technology allows for the management and monetization of music used in user generated content, we can ensure that artists and creators make their music available on a popular global platform and one that, importantly, is also optimized for monetization.”

The Rising Tide of UGC in Japan’s Booming Music Market

Our partnership with Bandai Namco Music Live sees Orfium move into anime music, a genre with its roots firmly in Japan but which has grown in popularity around the world as anime (short for animation) has become a global phenomenon. According to research by Morgan Stanley, the market reached a value of US$25 billion in 2023 and is expected to grow to US$35 billion by 2030. Japan is the major contributor, accounting for over 40% of the global anime market in 2020.

This growth provides a perfect opportunity for those in the entertainment and music industry to utilize new solutions to capitalize on international growth and fan appetite – a natural alignment with Orfium’s expansion into the Japanese and Asian markets. Leveraging our market-leading AI-powered technology, Orfium aims to help clients optimize and monetize user-generated content (UGC) in this rapidly growing market.

The Path Ahead: Future Opportunities for Orfium in Japan

Japan has held a pivotal position in Orfium’s global expansion strategy since 2022 when we acquired Breaker and established Orfium Japan, led by Alan Swarts. This latest deal builds on our partnerships with Avex, Warner Music Japan, and Victor Entertainment, highlighting our commitment to driving global digital transformation through local collaborations.

With the strength and expertise of our dedicated local team and the latest in cutting edge AI-powered technology, we are excited to continue forging more partnerships in Japan and across Asia’s dynamic music and entertainment landscape to power revenues for rights holders and creators across digital music and broadcast platforms.

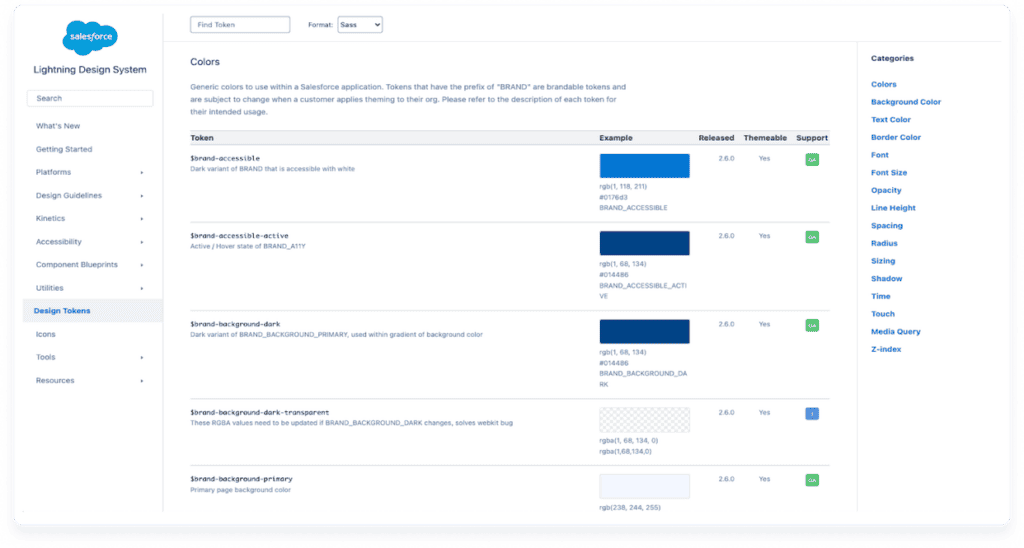

As of the writing of this article, the W3C Design Tokens Standard is still in development. Its goal is to establish a universal framework for using tokens that will be compatible with any tool or platform. This has the potential to be a game-changer and is worth keeping an eye on.

If you want to integrate design tokens into your design system but don’t quite know where to begin, you’re not alone. Adding design tokens to an existing system calls for good organization and consistent use. This already challenging task is not made any easier by the lack of standard naming conventions.

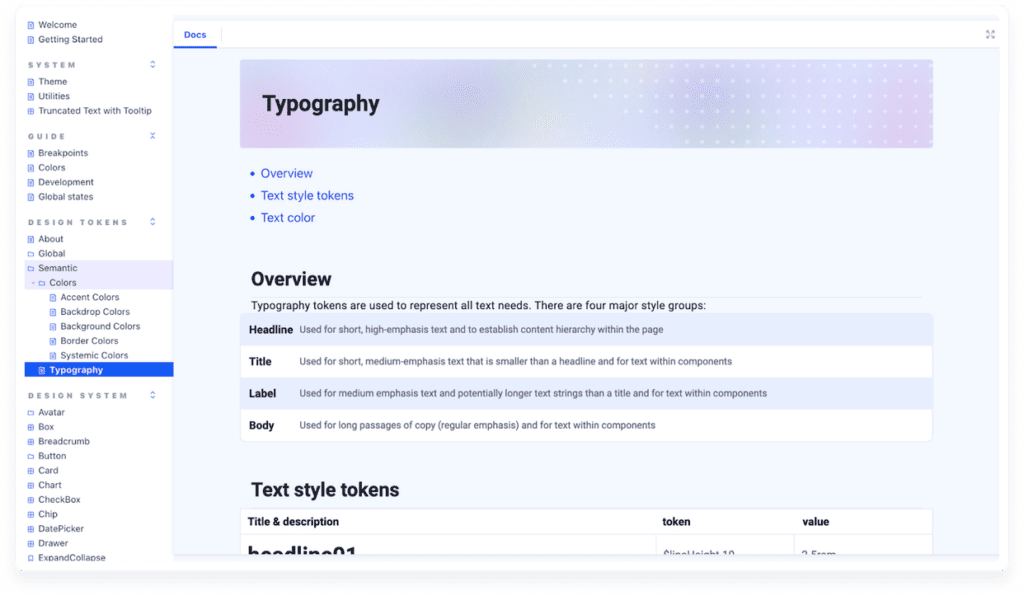

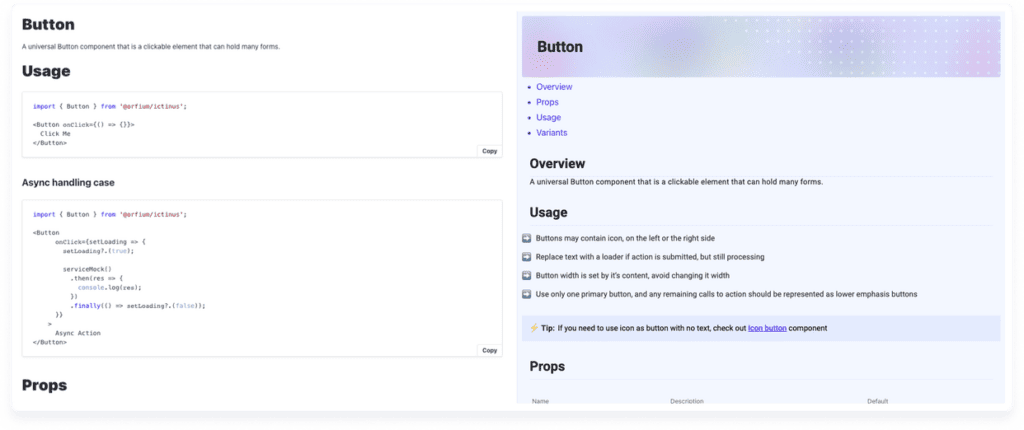

In this article, we’ll delve into how our team integrated design tokens into our design system, Ictinus. We’ll discuss the challenges we encountered, the solutions we came up with, and the impact design tokens had on our workflow. Finally, we’ll share insights gained from our experience.

What are design tokens?

A design token is a piece of data that encapsulates a single design decision.

The term ‘design token’ was first coined by Jina Anne at Salesforce in 2014. Tokens can represent a wide range of properties and link (‘alias’) to other tokens, making them scalable by nature.

Sound familiar? It’s similar to how programming variables work: you declare a specific value which is then propagated to many different instances. These values and relationships can be represented in JSON format.
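To make the variable analogy concrete, here is a minimal TypeScript sketch of tokens that alias one another. The token names, the hex value, and the curly-brace alias syntax are assumptions chosen for illustration; they are not the actual Ictinus tokens.

```typescript
// A flat token map: each entry is either a raw value or an alias written as "{other.token}".
// Names and values are made up for this example.
type TokenMap = Record<string, string>;

const exampleTokens: TokenMap = {
  "color.brand": "#1f6feb",
  "button.background": "{color.brand}",
  "link.textColor": "{color.brand}",
};

// Follows aliases until a raw value is reached; throws on unknown names or alias cycles.
function resolveToken(map: TokenMap, name: string, seen: Set<string> = new Set()): string {
  if (seen.has(name)) throw new Error(`Alias cycle at "${name}"`);
  seen.add(name);
  const value = map[name];
  if (value === undefined) throw new Error(`Unknown token "${name}"`);
  const alias = /^\{(.+)\}$/.exec(value);
  return alias === null ? value : resolveToken(map, alias[1], seen);
}
```

Changing the single `color.brand` value changes what both `button.background` and `link.textColor` resolve to, which is the propagation the paragraph above describes.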

What are the benefits of design tokens?

In our preliminary research, we analyzed the approach of other Design Systems (e.g. Polaris, Carbon, etc.) and read up on token literature and best practices. Our early findings were enough to convince our team: tokens were worth the time, cost and effort of integration. Our biggest challenges at the time were gaps in communication and insufficient change tracking. Tokens promised to help with these issues as well as do the following:

1. Resolve gaps in communication

Design decisions often got ‘lost in translation’ due to inadequate documentation and time pressure. Discrepancies between design and implementation were not just common but expected. This translated to much more time and effort spent in QA – and still, mistakes could slip through.

2. Fix issues with change tracking

This gap in communication had a ripple effect. Changes in design documentation were not always reflected in current implementation. That made it hard to confirm whether a specific section of documentation was updated or not. When should we implement QA?

As a result, we had to freeze our design system to a specific version (V4); a last resort solution that opened a whole new can of worms. Last but not least, our current workflow all but ensured that the team ran at different speeds.

The more we learned about tokens, the more they seemed like a winning bet. They would help us resolve some of our biggest issues, become more efficient and enrich our design language.

3. Improve efficiency

Before Figma (our tool of choice) introduced variables, there was no way to natively link styles. Design tokens would allow us to mass-update multiple styles by editing a single aliased token. This would simplify maintenance and reduce cognitive load, allowing the team to focus on the bigger picture instead.

4. Extensive customization support

Design tokens can represent a wide range of properties beyond the usual color, typography, and layer effect styles. Tokens also support the following:

- Spacing

- Sizing

- Border radius

- Border width

- Animation styles

The challenges of implementing design tokens

It was not all sunshine and rainbows though. As mentioned before, a standardized framework for creating and using design tokens doesn’t exist just yet. In this article, Brian Heston addresses the most common design token implementation concerns.

Of special note is the mention that there is no common standard for naming and applying tokens. This lack of consistency poses challenges when combining components from different libraries. It also makes it hard to outline and follow a common set of best practices.

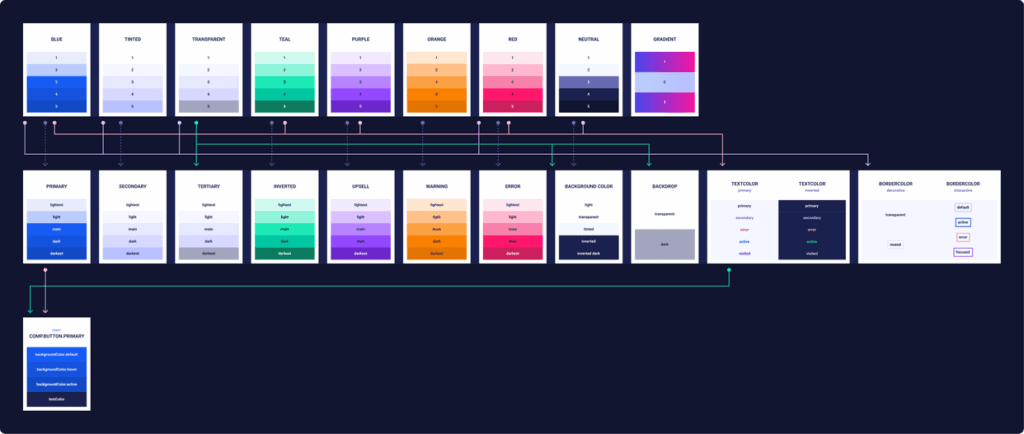

What token tiers are, and how we used them (aka layers, groups or sets…)

Before diving into token creation, we first needed to lay down the basics. What would we call different token levels, for example? And how many levels would we need?

Tokens can be organized into different levels of abstraction. Common names for different levels include ‘levels’, ‘types’, ‘tiers’, ‘layers’, ‘groups’, and ‘sets’. These levels help organize tokens based on their purpose, scope, or usage. We ended up going with ‘tiers’, as it was both the most unique-sounding and descriptive.

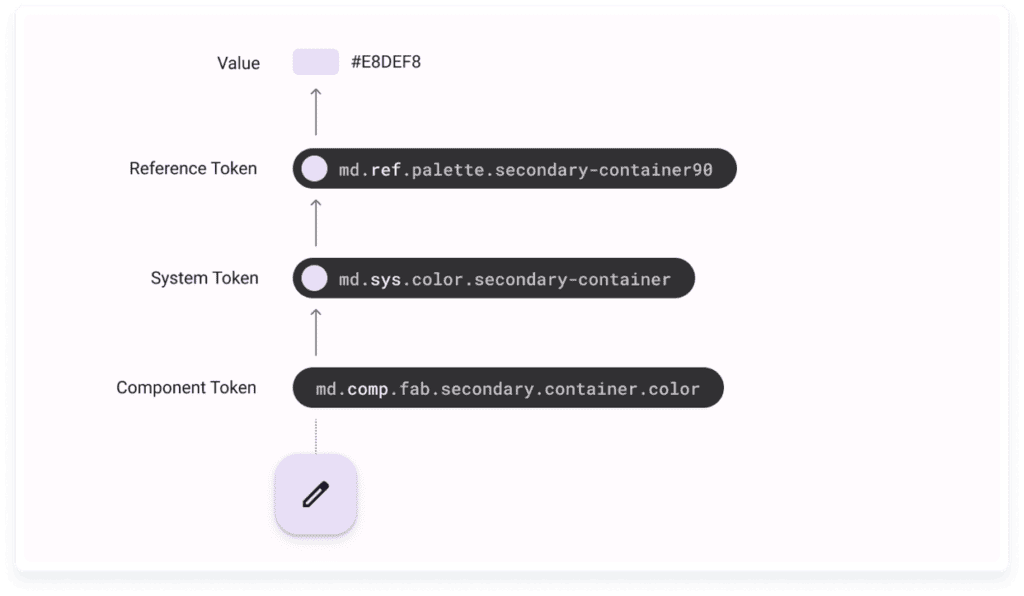

Naming settled, we shifted our focus to tier structure. How many tiers should we have? What should each tier contain? How would it relate to other tiers?

In the many faces of themeable design systems, Brad Frost suggests a three-tiered model that uses a vertical hierarchy.

Tier-1, the lowest tier, contains tokens that encapsulate raw visual design materials.

Tier-2 tokens are contextual, and link Tier-1 tokens to high-level usage.

Finally, Tier-3 tokens are specific to components and link to Tier-2 tokens.

How we named our tiers

We settled on the name ‘global’ for Tier-1. It would contain every single tokenized value in our design system. ‘blue.3’, a token containing a single color value with no context, is an example of a ‘global’ tier token.

Tier-2 would draw from ‘global’ to create contextual tokens. For example, the ‘sem.textColor.active’ semantic token may link to ‘blue.3’, a global token, to describe text color for an ‘active’ state. We named this tier ‘semantic’ (‘sem’ for short).

You can think of Tier-2, the ‘semantic’ layer, as one of your theme layers. If you want to be able to switch to dark mode down the line, all you need is identical ‘semantic’ layers that point to different aliases.

Finally, Tier-3 became ‘component’ (abbreviated to ‘comp’). This final tier would link to Tier-2, drawing contextual tokens in component-specific combinations.
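The three tiers and the dark mode idea can be sketched in a few lines of TypeScript. Everything below is illustrative: the hex values, the ‘blue.7’ token, and the ‘comp.link.textColor.active’ name are assumptions made for the example, not real Ictinus tokens.

```typescript
// Tier-1 ('global'): raw values with no context. Values are made up for illustration.
const globalTier: Record<string, string> = {
  "blue.3": "#1f6feb",
  "blue.7": "#79c0ff",
};

// Tier-2 ('semantic'): one layer per theme, with identical keys pointing at different globals.
const semanticTier = {
  light: { "sem.textColor.active": "blue.3" },
  dark: { "sem.textColor.active": "blue.7" },
} as const;

// Tier-3 ('component'): component tokens only ever point at semantic tokens.
const componentTier = {
  "comp.link.textColor.active": "sem.textColor.active",
} as const;

type Theme = keyof typeof semanticTier;
type ComponentToken = keyof typeof componentTier;

// Walks component -> semantic -> global for the chosen theme.
function resolveForTheme(token: ComponentToken, theme: Theme): string {
  const semanticName = componentTier[token];
  const globalName = semanticTier[theme][semanticName];
  return globalTier[globalName];
}
```

Because the component tier never references a raw value directly, switching themes only means choosing a different semantic layer. Nothing in the component tier has to change.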

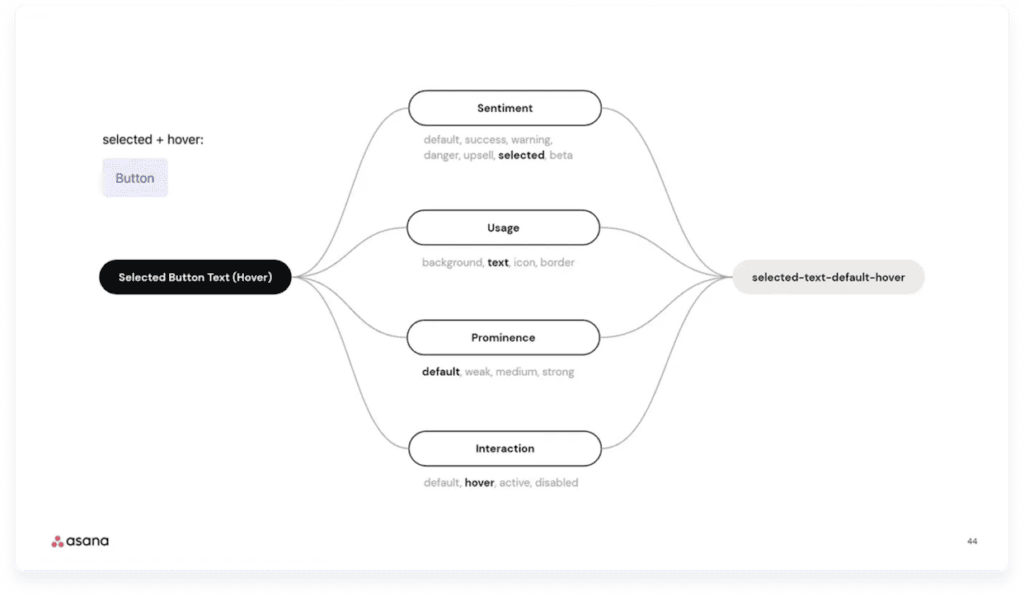

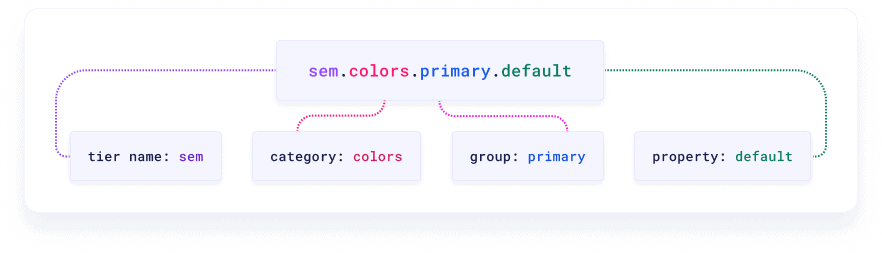

Token name conventions and semantic attributes

With the tiers in place, it was finally time to think about token name structure. What should we include in a design token name, and in what order? This well-known, oft-referenced talk by the Asana team at Schema 2021 served as a great starting point. The whole video is worth a watch, but of special interest is the hierarchical naming convention the Asana team uses to name design tokens.

Inspired by Asana and mindful of HTML/CSS standards, we created our own set of naming rules as follows:

- A token name is composed of the following semantic attributes: tier.category.property.state

- Tier: Indicates the tier the token can be found in.

- The ‘Global’ tier is omitted from the token name for the sake of simplicity.

- Tokens found in the ‘semantic’ tier start with ‘sem.’

- Tokens found in the ‘component’ tier begin with ‘comp.’

- Category: Names a general token category (e.g. ‘spacing’). In a ‘comp’ token, ‘category’ is the component name.

- Group: Provides context on a given category (e.g. ‘background’ as a subcategory of ‘color’).

- Property: Contextual value of the token. May also be a state or variant, or reference alternative visual variants for non-interactive components.

- Semantic attributes are separated by period (.)

- Token names that include more than one word are formatted in camelCase.
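The rules above can be sketched as a small name checker. This is an illustration in Python, not the tooling we actually use; the function name, the returned dictionary shape, and the decision to accept purely numeric attributes (as in ‘blue.3’) are assumptions.

```python
import re

# An attribute is camelCase: starts with a lowercase letter, then letters or digits.
_CAMEL_CASE = re.compile(r"^[a-z][a-zA-Z0-9]*$")

# Tier prefixes as described in the rules; 'global' has no prefix.
_TIER_PREFIXES = {"sem": "semantic", "comp": "component"}


def parse_token_name(name: str) -> dict:
    """Split a token name into its tier and remaining attributes, validating each part."""
    parts = name.split(".")  # attributes are separated by a period
    if parts[0] in _TIER_PREFIXES:
        tier = _TIER_PREFIXES[parts[0]]
        attributes = parts[1:]
    else:
        tier = "global"  # the global tier is omitted from the name
        attributes = parts
    if not attributes:
        raise ValueError(f"token name {name!r} has a tier but no attributes")
    for attribute in attributes:
        # Assumption: numeric scale steps such as the '3' in 'blue.3' are allowed.
        if not (_CAMEL_CASE.match(attribute) or attribute.isdigit()):
            raise ValueError(f"attribute {attribute!r} in {name!r} is not camelCase")
    return {"tier": tier, "category": attributes[0], "rest": attributes[1:]}
```

For example, `parse_token_name("sem.textColor.active")` reports the ‘semantic’ tier with ‘textColor’ as its category, while a name such as ‘sem.text-color’ is rejected because of the hyphen.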

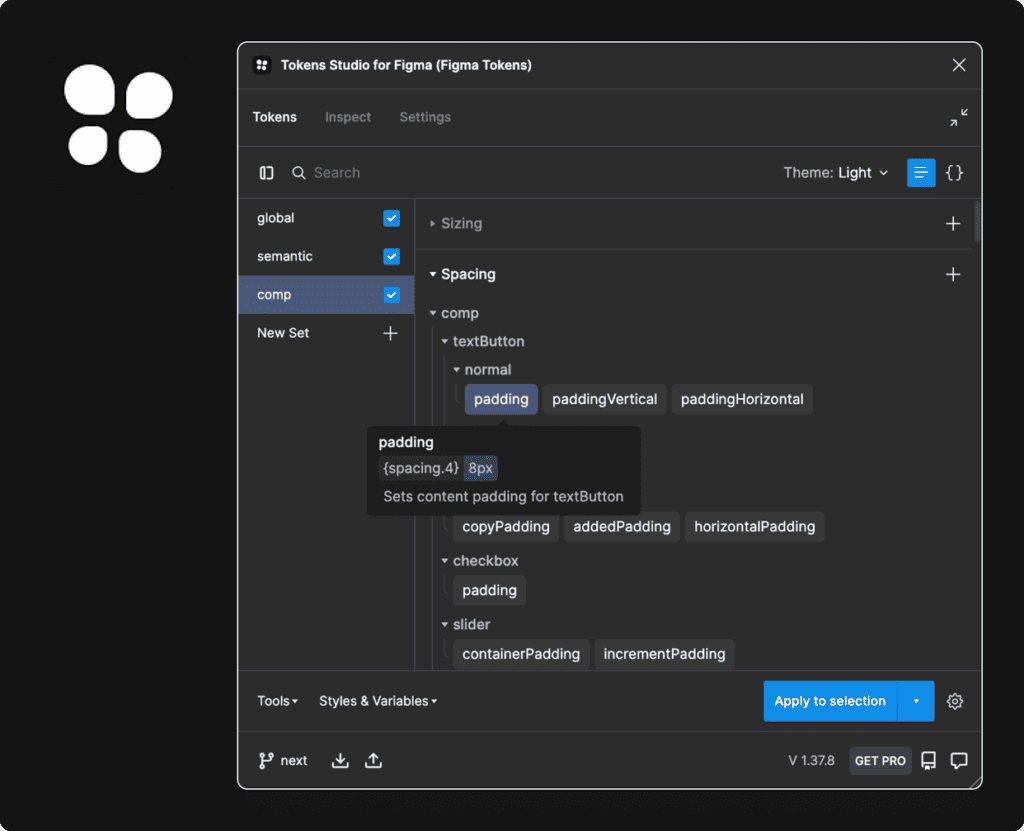

How we use Tokens Studio for Figma (our not so secret weapon)

When we were implementing design tokens, Figma didn’t offer support for token creation as variables had not been released yet. Even now, variables do not support properties like border width (and, as of the writing of this article, cannot be exported in JSON without a plugin). This meant we would need to use a third-party tool. We chose Tokens Studio, a popular choice that we could easily integrate into our Figma workflow.

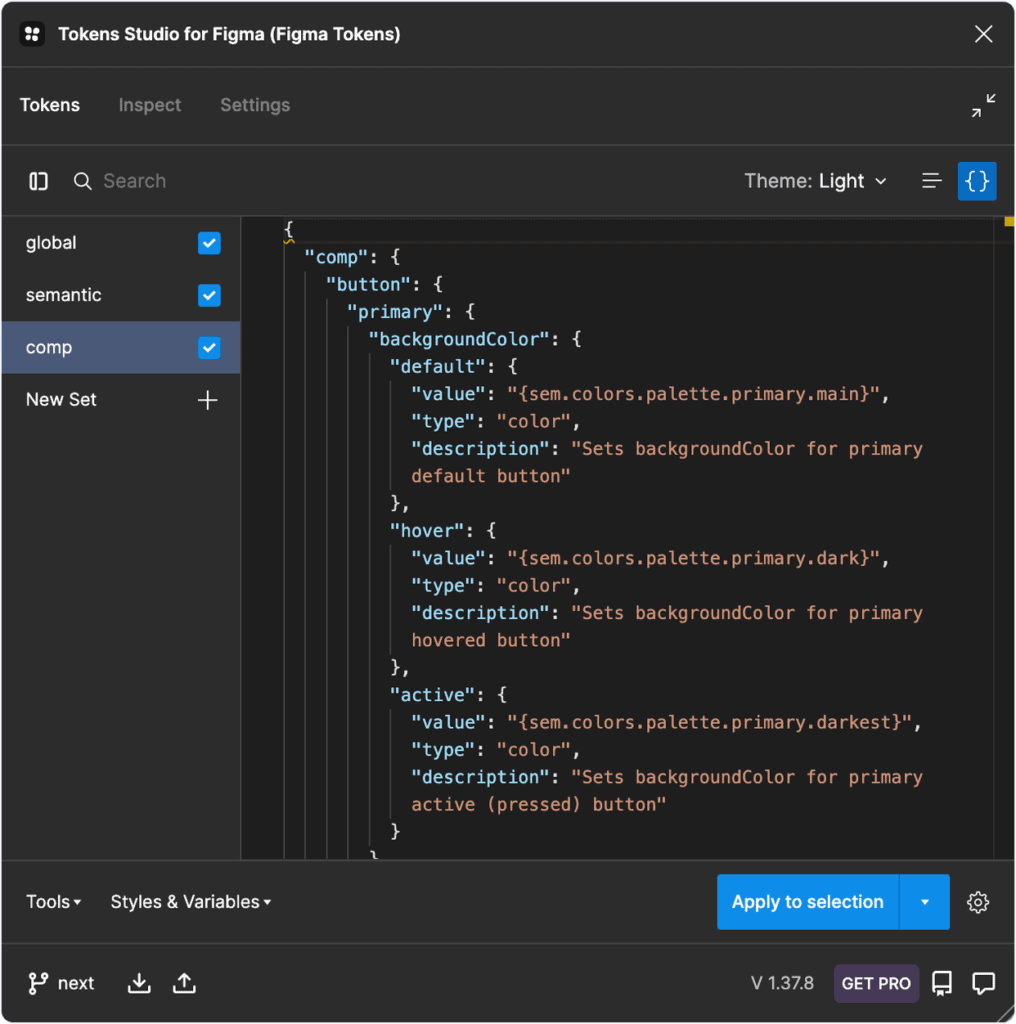

Tokens Studio for Figma represents tokens in JSON as a list of objects. You can create and edit tokens either via the user interface provided by the plugin or by directly editing the JSON code.

The ability to store the generated tokens as JSON in GitHub branches was a game-changer for our team. It allowed development to review design work as it was being done. Upon approval, TypeScript code would be auto-generated from that JSON. As a result, small issues were addressed and corrected in a fraction of the usual time. This was a big step towards bridging the aforementioned communication gap.
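As a rough illustration of the JSON-to-TypeScript step, the Python sketch below flattens a token file and emits a TypeScript constant. It is not our actual generator. The JSON shape (nested objects whose leaves carry ‘value’ and ‘type’ keys), the hex value, and the `tokens` export name are assumptions; a real export may look different.

```python
import json


def flatten(tree: dict, prefix: tuple = ()):
    """Yield (dotted_name, value) for every leaf object that has a 'value' key."""
    for key, node in tree.items():
        path = prefix + (key,)
        if isinstance(node, dict) and "value" in node:
            yield ".".join(path), node["value"]
        elif isinstance(node, dict):
            yield from flatten(node, path)


def to_typescript(tokens_json: str) -> str:
    """Render the flattened tokens as a TypeScript object literal."""
    tokens = dict(flatten(json.loads(tokens_json)))
    lines = ["export const tokens = {"]
    for name in sorted(tokens):
        # json.dumps produces string literals that are also valid in TypeScript.
        lines.append(f"  {json.dumps(name)}: {json.dumps(tokens[name])},")
    lines.append("} as const;")
    return "\n".join(lines)


# Assumed input shape, for illustration only.
EXAMPLE = '{"blue": {"3": {"value": "#1d6fe0", "type": "color"}}}'
```

Calling `to_typescript(EXAMPLE)` returns a three-line module exporting a single ‘blue.3’ entry, which a reviewer can diff in a pull request like any other code change.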

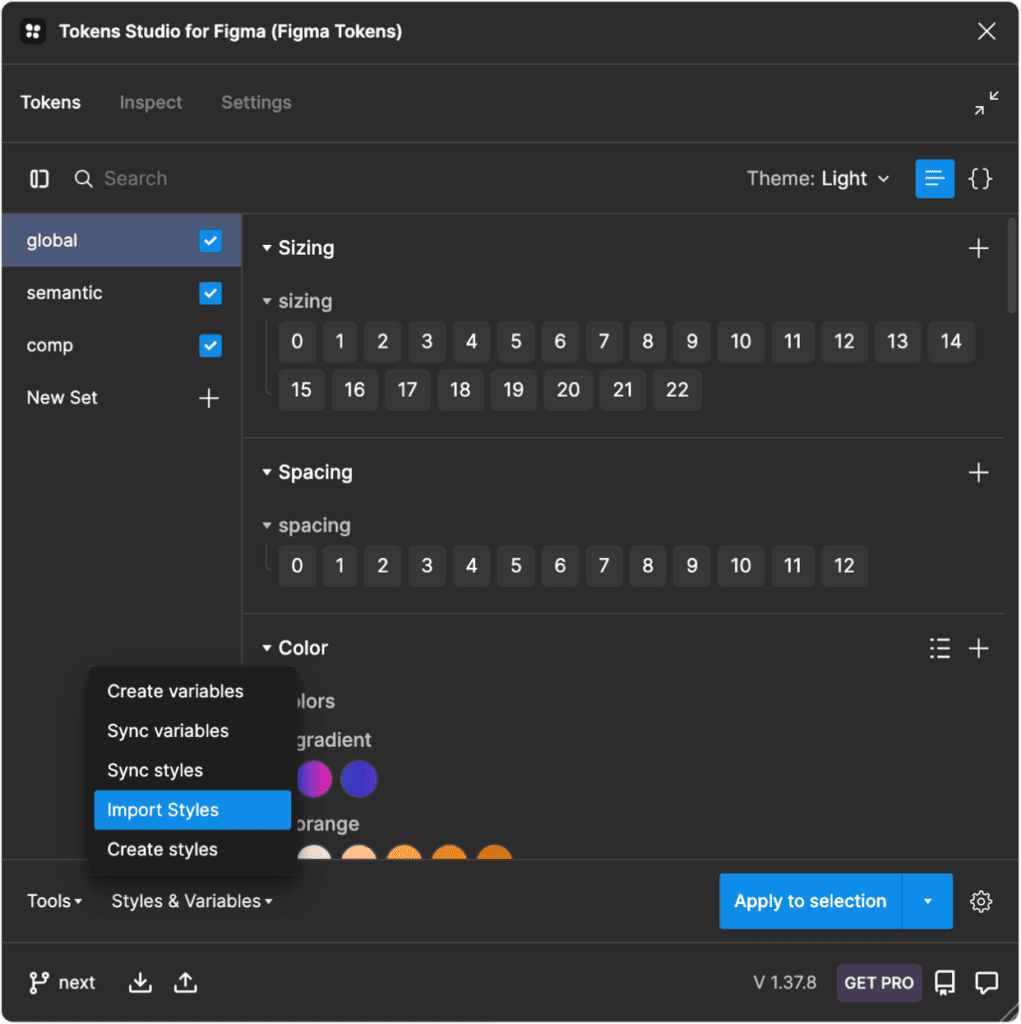

Importing global tier tokens

Tokens Studio allows you to import existing Figma styles and convert them into tokens; this saved us a lot of time. After we imported our preexisting color, typography, and layer effect styles into Tokens Studio, it was time for the good stuff. We created extra categories such as spacing, sizing, and border radius.

Semantic tier tokens

It was then time to begin work on the ‘semantic’ tier tokens. These are tokens with contextual meaning that link to context-less ‘global’ tokens. Semantic style examples include text color, typography styles, icon sizes, and interaction palettes. These styles are then combined in many different ways to make the final tier, ‘comp’.

Component design tier tokens

The component tier is the final layer (Tier-3). Each token created in this tier links to a ‘sem’ token. This allows for easy tracking of individual component tokens while still maintaining scalability.
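The chain from ‘comp’ through ‘sem’ down to ‘global’ can be sketched as an alias lookup. In this Python sketch, the curly-brace reference syntax, the ‘size.4’ and ‘sem.iconSize.small’ tokens, and the pixel value are assumptions made for illustration; only ‘comp.button.compact.iconSize’ appears in this article.

```python
TOKENS = {
    # Global tier: raw value (hypothetical).
    "size.4": "16px",
    # Semantic tier: contextual alias to a global token (hypothetical).
    "sem.iconSize.small": "{size.4}",
    # Component tier: links to a semantic token.
    "comp.button.compact.iconSize": "{sem.iconSize.small}",
}


def resolve(name: str, tokens: dict[str, str], _seen: tuple = ()) -> str:
    """Follow alias references until a raw value is reached."""
    if name in _seen:
        raise ValueError(f"circular reference: {' -> '.join(_seen + (name,))}")
    value = tokens[name]
    if value.startswith("{") and value.endswith("}"):
        # The value is an alias such as '{sem.iconSize.small}'; resolve its target.
        return resolve(value[1:-1], tokens, _seen + (name,))
    return value


def links_to_semantic(name: str, tokens: dict[str, str]) -> bool:
    """Check the tier rule: a 'comp' token must point directly at a 'sem' token."""
    return name.startswith("comp.") and tokens[name].startswith("{sem.")
```

Because each component token only stores a reference, changing the value behind ‘sem.iconSize.small’ updates every component token that links to it.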

The ‘category’ semantic attribute for ‘Component’ tier tokens is always the component name. This level of granularity allows for better control when working with large design systems.

Here’s an example of a ‘comp’ token:

comp.button.compact.iconSize

- ‘comp’: indicates ‘component’ token tier

- ‘button’: the component’s name

- ‘compact’: applies to compact button

- ‘iconSize’: sets icon size property

Results and Impact

The adoption and utilization of design tokens significantly enhanced our team’s workflow. Design tokens helped our team create a common language spoken by all and allowed for a unified mindset. This fostered a better understanding between designers and developers regarding each other’s constraints. It also led to significant delivery time and quality improvements.

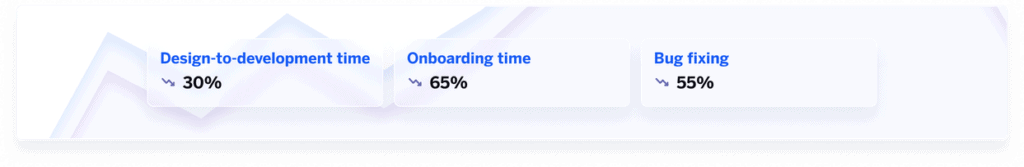

Internal design-to-development average time was reduced by roughly 30%. This has a lot to do with the fact that token structure is similar for both designers and front-end developers. As a result, understanding them and applying them correctly became a more intuitive process for both disciplines. This was also a big help towards streamlining communication and reducing excess noise.

Tokens also reduced visual discrepancies between design and implementation. As a result, time normally spent on QA was instead used to better develop the components and flesh out the documentation, with more thorough coverage of component behavior and patterns. This sped up the onboarding of users and products to the design system by approximately 65% and led to a sharp decline in bug fix tickets (approximately 55%).

The team’s next steps involve introducing the highly anticipated theming to ORFIUM products. Our previous framework made this a pipe dream, but with tokens, the sky’s the limit!

Takeaways

Here are some practical tips to help your team adopt and use design tokens:

- Define a clear tier structure that your team can understand at a glance and use with ease. Each tier should have a distinct name, abbreviation, and purpose.

- Create naming conventions for your design tokens as a team. Make sure the naming conventions are easy to remember and use.

- Document everything token related. Documentation should be accessible and understandable by both design and development team members.

- Simplify token creation and export with third-party tools like Tokens Studio for Figma.

That’s all for now, folks! We encourage you to share with us your own findings and concerns at @LifeatOrfium.

To learn more about design tokens, check out Jina Anne’s 2021 YouTube video or read the video summary by Amy Lee.

References

https://uxdesign.cc/naming-design-tokens-9454818ed7cb

https://medium.com/@amster/wtf-are-design-tokens-9706d5c99379

https://medium.com/fast-design/evolution-of-design-tokens-and-component-styling-part-1-f1491ad1120e

The Many Faces of Themeable Design Systems

https://medium.muz.li/unlocking-the-power-of-design-tokens-to-create-dark-mode-ui-18c0802b094e

We’re excited to share news of our latest partnership with Avex in Japan. Avex is a powerhouse in the world of entertainment and this partnership signals a significant step in Orfium’s growth as the first deal of its kind in Asia.

Japan is the world’s second largest music market, yet UGC monetization is still in its infancy. Recognising the opportunity to support the market there, Orfium expanded to Japan in 2022 with the acquisition of Breaker (now Orfium Japan) and has been growing solidly in the market since then.

Orfium is set to become Avex’s partner for music catalog management on YouTube, utilizing our AI-based matching technology to maximize revenue for Avex’s rights holders, artists, writers, and composers.

Who is Avex?

Picture a goliath in the world of entertainment, a company that weaves music, animation, film, and digital prowess into the iconic music landscape in Japan. The sounds behind a whole world of anime, filmmaking, Japan’s iconic and thriving DJ scene and so much more. That’s Avex Inc.

Founded in 1988, they started in the music and entertainment industry as a wholesale business of imported music records. They’ve since expanded to be a powerhouse in record labels, live music performances, animation, and filmmaking.

Avex’s mission is most aptly put by its own Representative Director and CEO, Katsumi Kuroiwa: “Making impossible entertainment, possible.” The company has been a pioneer in doing just that. Projects like Justin Bieber’s world tour to Japan and the first fully remotely animated film, ‘She Sings, As If It Were Destiny’, are just two shining examples of Avex’s innovation. They show that Avex is a driving force in, and a strong representation of, the Japanese market’s desire to consume and contribute to music and entertainment.

The Growth of UGC & the Japanese Music Market

Ranking as YouTube’s third-largest revenue contributor, Japan’s digital music scene is flourishing. Although YouTube thrives with 70 million monthly active users, UGC monetization is just taking flight, with many Japanese music companies only now transitioning from restrictions to embracing the vast potential of sharing and licensing.

In 2022, despite YouTube’s global payout of over $6 billion, Japan received a modest 6%, which clearly shows the opportunity for artists and rights holders to gain more for their art. Many platforms’ own services for tracking and monetizing music leave gaps, and potentially millions of dollars’ worth of revenue goes unfound and unclaimed. There is a huge opportunity to fill those gaps, and as more music is shared, that opportunity is only growing.

Having already established ourselves as the leader in other major markets for UGC rights management and monetization, Orfium is excited to be a part of reclaiming those missed revenue opportunities by partnering with more Japanese clients, and supporting the unique industry there. We already work with the world’s leading Music Publishers, Record Labels, Broadcasters and Production Music Companies in the US and UK, and have delivered unrivaled results for them; and we’re excited to demonstrate the same value for the Japanese and Asian markets.

Our local team, led by the experienced and passionate Alan Swarts, has been instrumental in our swift rise in the Japanese market. Behind every great achievement is a dedicated and skilled team, and Orfium Japan is no exception. With their deep industry knowledge and commitment, they’ve ensured that Orfium Japan is positioned to deliver the same degree of success we’ve seen around the world for our clients.

Looking Ahead: What Lies Beyond the Horizon

Orfium’s partnership with Avex follows agreements with Warner Music Japan and Victor Entertainment, and we’re looking forward to sharing news of more Japanese partner deals very soon. Driven by our company goal to deliver optimized UGC revenue generation, we are continuing to improve the industry for creators, artists and rights owners, one partnership at a time. As we grow internationally, we look forward to more partnerships across the music and entertainment landscape of Japan and Asia.

Be a Part of the Journey with Orfium Japan

If you’re intrigued by our journey and want to explore opportunities with Orfium, don’t hesitate to reach out to our team.

We’re delighted to announce Orfium’s official sponsorship of All That Matters 2023!

As Orfium rolls out our full suite of tools and services into the Japanese and Asian markets, we are proud to enter the region with the confidence and clientele of some of the largest broadcasters, video game publishers and music publishers.

With a global team of over 600 specialists, including dedicated teams in Taiwan, Japan, and South Korea, we have already established ourselves as a major player in solving key challenges in catalog and rights management in the entertainment industry. Our participation in the All That Matters event, which aligns perfectly with our core vision of driving innovation in the entertainment industry, provides us with the perfect platform to introduce our innovative solutions and to network with other professionals in the region.

Our sponsorship of the event reflects our unwavering dedication to driving innovation in the industry. We are excited to connect with other professionals, artists, and visionaries in the industry to explore the latest trends and innovations shaping the future.

About All That Matters 2023

The event blends business insights with fan enthusiasm and will take place in Singapore from September 11-13, 2023. This annual gathering has become a highlight on the industry calendar, where entertainment, media, and technology professionals come together to focus on current and future challenges, opportunities and innovation. An impressive lineup of professionals, artists, experts, and visionaries will explore the latest trends and innovations shaping the future of these dynamic fields.

What’s in Store

The agenda is filled with an engaging array of keynotes, interactive panels, workshops, music showcases, and networking opportunities that are designed to foster the exchange of knowledge and ideas. This event is a platform to uncover the ever-evolving trends, challenges, and opportunities in music, gaming, sports, marketing, and beyond.

The Orfium team will be there, including Alan Swarts, CEO of Orfium Japan, Bonna Choi, Head of Soundmouse by Orfium Korea, John Possman, Director of Orfium Japan, and Shiro Hosojima, Chief Operations Officer in Japan.

Orfium to Discuss Online Media Monetization

Orfium will participate in a panel discussion on online media monetization at All That Matters 2023. The panel, titled “Online Media Matters: A Comprehensive Guide for Content Owners and Creators,” will be held on Tuesday, September 12, 2023, from 2:20 PM to 2:50 PM (GMT+8).

Alan Swarts, CEO of Orfium Japan, will be one of the panelists and will share his insights on the latest growth monetization strategies for content owners and creators in the Asia Pacific region. Some of the topics to be discussed will include different revenue streams for content owners, from ad revenue to brand collaborations, as well as legal considerations and future trends in online media monetization. Alan will be joined on stage by other panelists including Hao Zhang, Head of Western Content Partnerships & Licensing at Tencent Music Entertainment Group (TME), and Reta Lee, Head of Commerce at Yahoo.

Orfium’s Vision and Contribution

Orfium’s partnership with All That Matters perfectly aligns with our core mission: driving innovation in entertainment through technology. We offer a suite of services that empower music creators, labels, broadcasters, and more to effortlessly manage and monetize their content. Our involvement in this event is a testament to our dedication to forward-thinking discussions, industry insights, and collaborative endeavors.

Shaping the Future Together with All That Matters 2023

This sponsorship showcases our ongoing commitment to nurturing growth, creativity, and progress in music and entertainment. We’re confident that All That Matters will serve as an invaluable platform for forging connections, sparking fresh ideas, and advancing our collective understanding of this ever-evolving landscape.

A Special Thanks

Our heartfelt gratitude goes out to the organizers and participants who have crafted an environment that encourages exploration, collaboration, and insightful conversations. We’re eagerly looking forward to contributing to the event’s success and engaging in meaningful exchanges that will undoubtedly shape the future of entertainment and technology.

For more details about the event, including registration information and the schedule, please visit the official All That Matters website.

Come Meet us at All That Matters 2023!

If you’re curious to learn more about Orfium and how we’re using machine learning, AI, and innovative algorithms to streamline and boost client revenue streams, feel free to drop by our Orfium VIP Lounge at the event, and leave your details here.

We’re back

As described in a previous blog post from ORFIUM’s Business Intelligence team, the set of tools and software we used changed over time. A shorter list of software was used when the team was two people strong; a much longer and more sophisticated one is in use today.

2018-2020

As described previously, the two people in the BI team were handling multiple types of requests, both from stakeholders within the company and from ORFIUM’s customers. For the most part, they were dealing with Data Visualization, along with a small amount of data engineering and some data analysis.

Since the team was just the two of them, tasks were more or less divided into engineering and analysis on one side and visualization on the other. As you might guess, combining data from Amazon Athena with Google Spreadsheets or ad-hoc CSVs took a lot of scripting in Python. Data were retrieved from these various sources and, after some (more often than not complex) transformations and calculations, the final deliverables were CSVs to either send to customers or load into a Google Sheet. In the latter case, a simple pivot table was also bundled into the deliverable to jump-start any further analysis by the (usually internal) customer.

In other cases, where the customer requested graphs or a whole dashboard, the BI team used Amazon Athena’s SQL editor to run the exploratory analysis. Once the proper dataset for analysis was found, we saved the results to a separate SCHEMA_DATASET in Athena itself. The goal behind that approach was to make use of Amazon’s internal integration of their tools, so we delivered our solutions in Amazon Quicksight. At that moment, this seemed to be the decision that would produce deliverables the fastest, though not the most beautiful or the most scalable ones.

Quicksight offers very good integration with Athena, as both sit under the Amazon umbrella. To be completely honest, at that point in time the BI Analyst’s working experience was not optimal. From the consumer side, the visuals were efficient but not too beautiful to look at, and from ORFIUM’s perspective a number of full AWS accounts were needed to share our dashboards externally, which created additional cost.

This process changed slightly when we decided to evaluate Tableau as our go-to solution for Data Visualization. One of the two BI members at that time leaned pretty favorably towards Tableau, so they decided to pitch it. Through an adoption proposal, which was eventually approved by ORFIUM’s finance department, Tableau came into our quiver. It soon became our main tool of choice for Data Visualization. It allows management to make better and more educated decisions, and it showcases the value that our company can offer to our current and potential future clients.

This part of BI’s evolution led to the deprecation of both Quicksight and Python usage, as pure SQL queries and DML were developed to create tables within Athena, and some custom SQL queries were embedded in the Tableau connection to the Data Warehouse. We focused on uploading ad-hoc CSVs or data from GSheets to Athena, and from there the almighty SQL took over.

2021-2022

The team eventually grew larger and more structured, and the company’s data vision shifted towards Data Mesh. Inevitably, we needed a new and extended set of software.

A huge initiative to migrate our whole data warehouse from Amazon Athena to Snowflake started, with BI’s main data sources playing the role of the early adopters. The YouTube reports were the first to be migrated, and shortly after the Billing reports were created in Snowflake. That was it, the road was open and well paved for the Business Intelligence team to start using the vast resources of Snowflake and start building the BI-layer.

What began as a small code migration project, meant to point our code at the proper source and recreate the tables that Tableau expected from us, turned into a large project of fully restructuring the way we worked. In the past, the Python code used for data manipulation was stored in local Jupyter notebooks, and the SQL queries that created the datasets to visualize lived either within View definitions in Athena or in Tableau Data source connections. There was no real version control. There was a GitHub repo, but it was mainly used as code storage for ad-hoc requests, with limited focus on keeping it up to date or explaining the reasoning behind updates. There were no feature branches, and almost all new commits on the main branch added new ad-hoc files to the root folder with the default commit message. This situation, despite being a clear pain point for the team’s efficiency, emerged as a huge opportunity to scrap everything and start working properly.

We set up a working guide for our Analysts: training on the usage of git and GitHub, working with branches, pull request templates, commit message guidelines, and SQL formatting standards, all deriving from the concept of having an internal Staff Engineer. We started calling the role Staff BI Analyst, and we currently have one person setting the team’s technical direction. We’ll discuss this role further in a future blog post.

At the same time, we were exploring how to combine tools so that the BI Analysts could focus on writing proper and efficient SQL queries, without either being fully dependent on Data Engineers to build the infrastructure for data flows or needing Python knowledge to create complex DAGs. dbt and Airflow surfaced from our research and, frankly, from the overall hype, so we decided to go with the combination of the two.

Initially the idea was to use Airflow alone. An elegant loop would scan the dags folder and, relying on folder structures and naming conventions for the SQL files, turn each subfolder into a DAG on the Airflow UI using nothing but the SnowflakeOperator. Each file in a subfolder would become a SnowflakeOperator task, and the dependencies between tasks would be derived from the file names. In practice, a folder structure like the one shown on the right would automatically create a dynamic DAG like the one shown on the left.
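To give a feel for that loop, here is a minimal Python sketch of the planning step only. The naming convention is an assumption (a numeric prefix such as ‘01_’, where every task depends on all tasks of the previous step), as are the function names. Creating the actual Airflow DAG and SnowflakeOperator tasks from the resulting plan is left out, so the sketch runs without Airflow installed.

```python
from itertools import groupby
from pathlib import Path


def step_of(task_id: str) -> int:
    """Return the numeric prefix of a task id such as '02_clean_orders'."""
    # Raises ValueError for files that do not follow the assumed convention.
    return int(task_id.split("_", 1)[0])


def plan_tasks(task_ids: list[str]) -> dict[str, list[str]]:
    """Map every task to its upstream tasks: all tasks of the previous step."""
    plan: dict[str, list[str]] = {}
    previous: list[str] = []
    ordered = sorted(task_ids, key=lambda t: (step_of(t), t))
    for _, group in groupby(ordered, key=step_of):
        current = list(group)
        for task_id in current:
            plan[task_id] = list(previous)
        previous = current
    return plan


def scan_dags_folder(dags_root: Path) -> dict[str, dict[str, list[str]]]:
    """Turn every subfolder of dags_root into one DAG plan keyed by folder name."""
    return {
        folder.name: plan_tasks([f.stem for f in folder.glob("*.sql")])
        for folder in sorted(dags_root.iterdir())
        if folder.is_dir()
    }
```

In a real DAG file, each key of a plan would become one SnowflakeOperator task running the matching SQL file, and each upstream list would be wired as that task's dependencies.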

No extra Python knowledge needed, no Data Engineers needed: just store the proper files with the proper names. We also experimented briefly with DAGfactory, but we soon realized that Airflow should only be the orchestrator of the analytics tasks, and that the analytics logic itself should be handled by something else. All of this was abandoned very soon, once dbt was fully onboarded to our stack.

Anyone who works in the Data and Analytics field must have heard of dbt. And if they haven’t already, they should. This is why there is nothing too innovative to describe about our dbt usage. We started using dbt early in its development, having first installed v0.19.1. After an initial setup period with our Data Engineers, we combined Airflow with dbt Cloud for our production data flows and the dbt Core CLI for local development. Soon after, in some of our repos, we started using GitHub Actions to schedule and automate runs of our Data Products.

All of the BI Analysts in our team are now expected to attend all courses from the Learn Analytics Engineering with dbt program offered at dbt Learn. The dbt Analytics Engineering Certification Exam remains optional. However, we are all fluent in using the documentation and the Slack community. Generic tests dynamically created through yml, alerts in our instant messaging app whenever a DAG fails, and snapshots are just some of the features we have developed to help the team. As mentioned above, our Staff BI Analyst plays a leading role in creating this culture of excellence.

There it was. We endorsed the Analytics engineering mindset, we reversed the ETL and implemented ELT, thus finally decoupling the absolute dependency on Data Engineers. It was time to enjoy the fruits of Data Mesh: Data Discovery and Self Service Analytics.

2023-beyond

Having implemented almost all of ORFIUM’s Data Products on Snowflake with proper documentation, we just needed to proceed to the long-awaited data democratization. Two key pillars of democratizing data are making them discoverable and making them available for analysis by non-BI Analysts too.

Data Discovery

As Data Mesh principles dictate, each data product should be discoverable by its potential consumers, so we also needed to find a technical way to make that possible.

We first needed to ensure that data were discoverable. For this, we started testing out some tools for data discovery. Among the ones tested was Select Star, which turned out to be our final choice. While we were looking for the right tool, Select Star was still early in its evolution and development. After realizing our sincere interest, they invested in building a strong relationship with us: they consulted us closely when building their roadmaps and kept in frequent communication to get our feedback as soon as possible. The CEO herself, Shinji Kim, attended our weekly call, helping us make not just our data discoverable to our users, but the tool itself easy to use in order to increase adoption.

Select Star offered most of the features we knew we wanted at that time, and it offered a quite attractive pricing plan which went in line with our ROI expectations.

Now, more than a year after our first implementation, we have almost 100 active users on Select Star, which is a pretty large part of the internal Data consumer base within ORFIUM, given that we have quite a large operations department of people who do not need to access data or metadata.

We are looking to make it the primary gateway of our users to our data. All analysis, even thoughts, should start by using Select Star to explore if data exist.

Now, data discovery is one thing, and documentation coverage is another. There’s little point in making it easy for everyone to search only for table and column names. We need to add metadata to our tables and columns so that Select Star’s search results parse that content too and provide all available info to seekers. Working in this direction, we have added a clause to the Definition of Done for any new table in the production environment: there must be documentation on both the table and its columns. Documentation on the table should include not only technical details like primary and foreign keys, level of granularity, and expected update frequency, but also business information like the source of the dataset, as this varies between internal and external producers. Column documentation is expected to include expected values, data types and formats, but also business logic and insight.

The Business Intelligence team uses pre-commit hooks to ensure that all produced tables contain descriptions for all the columns and for the tables themselves, but we cannot always be sure of what is going on in other Data Products. As Data culture ambassadors (more on that in a separate post too), BI has set up a data coverage monitoring dashboard to quantify the docs coverage of tables produced by other Products, raising alerts when the coverage percentage falls below the pre-agreed threshold.
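The coverage check behind such a hook or dashboard can be sketched in a few lines of Python. This is an illustration rather than our actual implementation: the input shape (a list of tables, each with a ‘description’ and a list of ‘columns’), the function names, and the 0.9 threshold are all assumptions.

```python
def docs_coverage(tables: list[dict]) -> float:
    """Return the share of tables and columns that carry a non-empty description."""
    documented = 0
    total = 0
    for table in tables:
        # The table itself and each of its columns count as one documentable item.
        for item in [table] + table.get("columns", []):
            total += 1
            if (item.get("description") or "").strip():
                documented += 1
    return documented / total if total else 1.0


def needs_alert(tables: list[dict], threshold: float = 0.9) -> bool:
    """True when coverage falls below the agreed threshold (0.9 is hypothetical)."""
    return docs_coverage(tables) < threshold


# Hypothetical metadata: one table with one documented and one undocumented column.
EXAMPLE_TABLES = [
    {
        "name": "billing_reports",
        "description": "One row per invoice line.",
        "columns": [
            {"name": "invoice_id", "description": "Primary key."},
            {"name": "amount", "description": ""},
        ],
    }
]
```

With the example metadata, two of the three items are documented, so the coverage sits below the hypothetical threshold and `needs_alert` returns `True`.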

Tags and Business and Technical owners are also implemented through Select Star, making it seamless for data seekers to ask questions and start discussions on the tables with the most relevant people available to help.

Self-Service Analytics

The whole Self-Service Analytics initiative at ORFIUM, as well as Data Governance, will be covered in their very own blog posts. For now, let’s focus on the tools used.

Having all ORFIUM Data Products accessible on Snowflake and discoverable through Select Star, we were in position to launch the Self-Service Analytics project. A decentralization of data requests from BI was necessary in order to be able to scale, but we could not just tell our non-analysts “the data is there, knock yourself out”.

We had to decide if we wanted Self-Service Analysts to work on Tableau or if we could find a better solution for them. It is interesting to tell the story of how we evaluated the candidate BI tools, as there were quite a few on our list. We do not claim this is the only correct way to do this, but it’s our take, and we must admit that we’re proud of it.

We decided to create a BI Tool Evaluation tool. We had to outline the main pillars on which we would evaluate the candidate tools. We then anonymously voted on the importance of those pillars, averaging the weights and normalizing them. We finally reached a total of 9 pillars and 9 respective weights (summing up to 100%). The list of pillars includes connectivity effectiveness, sharing effectiveness, graphing, and exporting, among other factors.
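The averaging and normalizing step can be written down in a few lines. The Python sketch below uses made-up votes for three pillars; the function name and the vote scale are assumptions, not a record of our actual ballot.

```python
def normalized_weights(votes: dict[str, list[float]]) -> dict[str, float]:
    """Average the anonymous votes per pillar, then scale so the weights sum to 1."""
    averages = {pillar: sum(values) / len(values) for pillar, values in votes.items()}
    total = sum(averages.values())
    return {pillar: average / total for pillar, average in averages.items()}


# Hypothetical votes from two voters on a 1-5 importance scale.
EXAMPLE_VOTES = {
    "connectivity": [5, 3],
    "sharing": [2, 2],
    "graphing": [4, 4],
}
```

Here the averages are 4, 2 and 4, so the normalized weights come out as 40%, 20% and 40%.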

These pillars were then analyzed in order to come up with small testing cases, using which we would assess the performance in each pillar, not forgetting to assign weights on these cases too, so that they sum up to 100% within each pillar. Long story short we came up with 80 points to assess each one of the BI tools.

We needed to be as impartial as possible on this, so we assigned two people from the BI team to evaluate all 5 tools involved. Each BI tool was also evaluated by 5 other people from within ORFIUM but outside BI, all of them potential Self-Service Analysts.

Coming up with 3 evaluations for each tool, averaging the scores, and then weighting them with the agreed weights, led us to an amazing Radar Graph.
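For readers who want to reproduce the arithmetic, here is a minimal Python sketch of the scoring. All pillar names, case names, weights and scores below are invented for illustration, and the function name is hypothetical; the per-pillar scores are the kind of values a radar graph would plot.

```python
def score_tool(
    evaluations: list[dict[str, dict[str, float]]],
    case_weights: dict[str, dict[str, float]],
    pillar_weights: dict[str, float],
) -> tuple[dict[str, float], float]:
    """Average each test case across evaluators, weight cases within each
    pillar, then weight the pillars to get one overall score."""
    pillar_scores = {}
    for pillar, cases in case_weights.items():
        pillar_scores[pillar] = sum(
            weight * sum(e[pillar][case] for e in evaluations) / len(evaluations)
            for case, weight in cases.items()
        )
    overall = sum(pillar_weights[p] * s for p, s in pillar_scores.items())
    return pillar_scores, overall


# Hypothetical setup: two pillars, case weights summing to 1 within each pillar.
PILLAR_WEIGHTS = {"graphing": 0.6, "exporting": 0.4}
CASE_WEIGHTS = {
    "graphing": {"barChart": 0.5, "lineChart": 0.5},
    "exporting": {"csv": 1.0},
}
# One dictionary of case scores per evaluator.
EVALUATIONS = [
    {"graphing": {"barChart": 4, "lineChart": 2}, "exporting": {"csv": 5}},
    {"graphing": {"barChart": 2, "lineChart": 4}, "exporting": {"csv": 3}},
]
```

With these numbers, the graphing pillar averages to 3 and the exporting pillar to 4, giving an overall score of about 3.4.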

Though there is a clear winner in almost all pillars, it performed very poorly in the last pillar, which contained Cost per user and Ease of Use/Learning Curve.

We decided to go for the blue line, which was Metabase. We found that it would serve more than 80% of the current needs of Self-Service Analysts, at very low cost and with almost no code at all. In fact, we decided (Data Governance had a say in this too) not to allow users to write SQL queries on Metabase to create graphs. We wanted the people who write SQL to do so on the Snowflake UI, as they were few and SQL-experienced, usually backend engineers.

We wanted Self-Service Analysts to use the query builder, which covers an adequate amount of SQL features, in order to avoid coding altogether. If they got accustomed to the query builder, they would cover 80% of their needs with no SQL, and the rest of the Self-Service Analysts (the even less tech-savvy) would be inspired to try it out too.

After ~10 months of usage (on the Self-Hosted Open Source version costing zero dollars per user per month, which translates to *calculator clicking* zero dollars total) we have almost 100 Monthly Active Users and over 80 Weekly Active Users, and a vibrant community of Self-Service Analysts looking to get more value from the data. The greatest piece of news is that the Self-Service Analysts are becoming more and more sophisticated in their questions. This is solid proof that, over the course of 10 months, they have greatly improved their own Data Analysis skills and, in turn, the effectiveness of their day-to-day tasks.

Of those (on average) 80 WAUs, the majority are Product Owners, Business Analysts, Operations Analysts, etc., but there are also around five high-level executives, including a member of the BoD.

Conclusion

The BI team and ORFIUM itself have evolved in the past few years. We started from Amazon Athena and Quicksight, and after a part of the journey with Python by our side, we have established Snowflake, Airflow, dbt and Tableau as the BI stack, while adding Select Star for Data Discovery and Metabase for Self-Service Analytics to ORFIUM’s stack.

We have more insights to share on the Self-Service Initiative, the Staff BI role, and the Data Culture at ORFIUM, so expect more info on these in upcoming posts.

We are eager to find out what the future holds for us, but at the moment we feel future-proof.